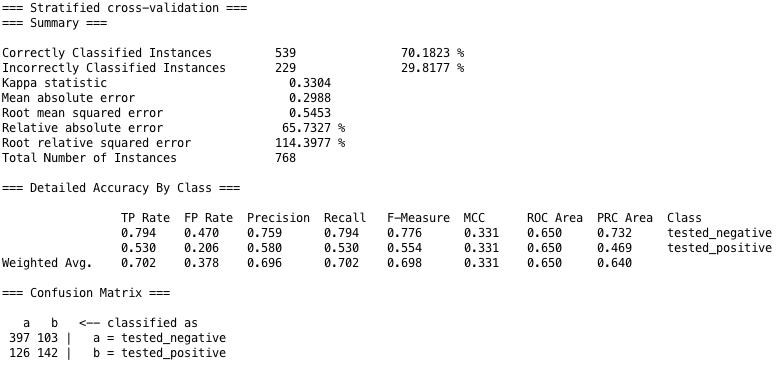

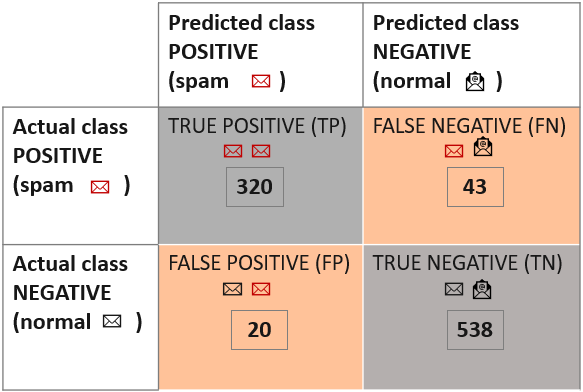

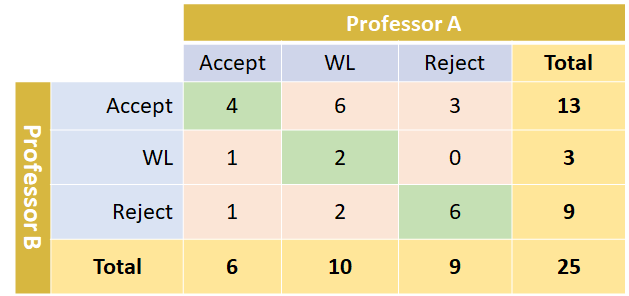

Multi-Class Metrics Made Simple, Part III: the Kappa Score (aka Cohen's Kappa Coefficient) | by Boaz Shmueli | Towards Data Science

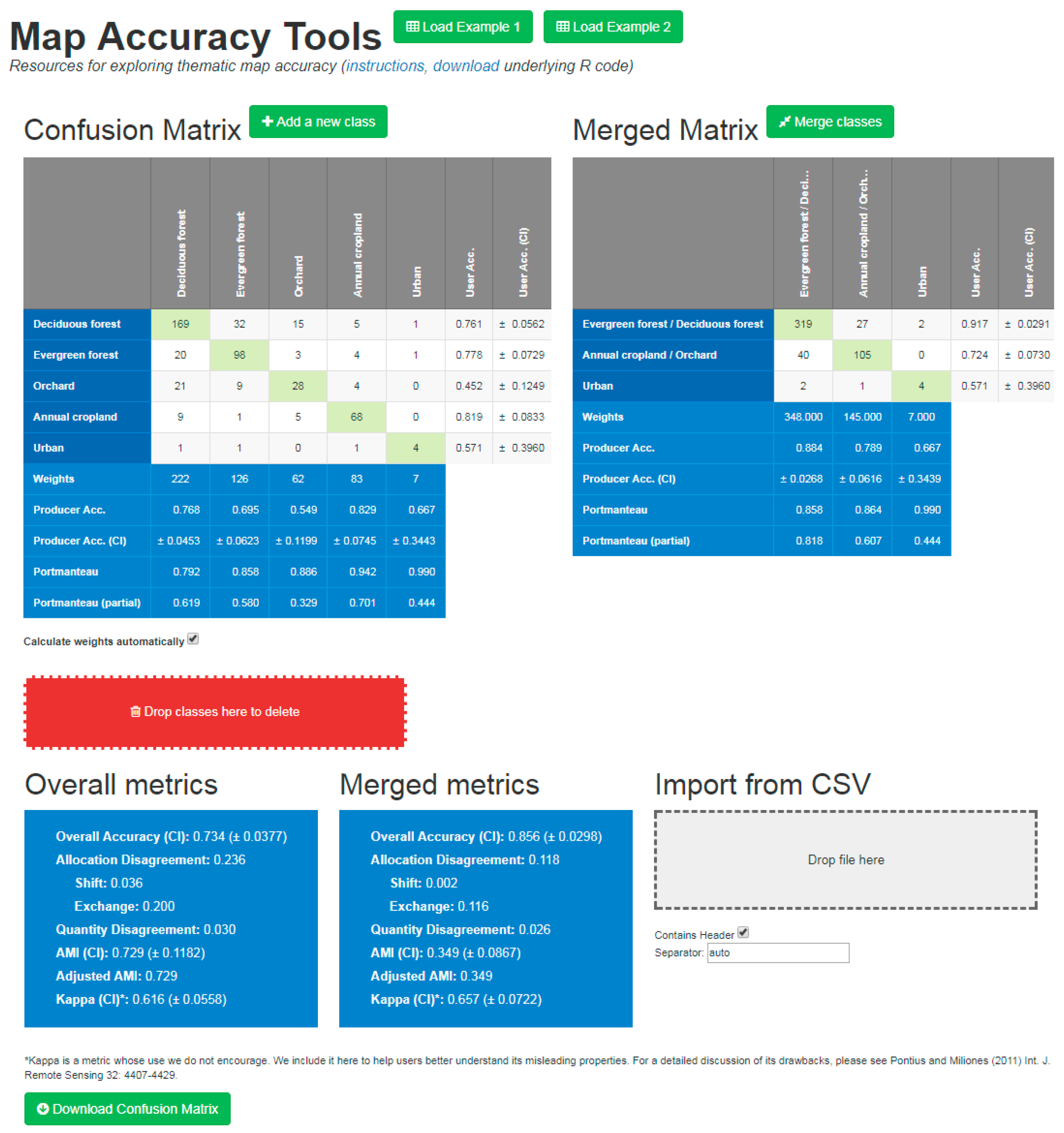

Remote Sensing | An Exploration of Some Pitfalls of Thematic Map Assessment Using the New Map Tools Resource

Cohen's Kappa: What it is, when to use it, and how to avoid its pitfalls | by Rosaria Silipo | Towards Data Science

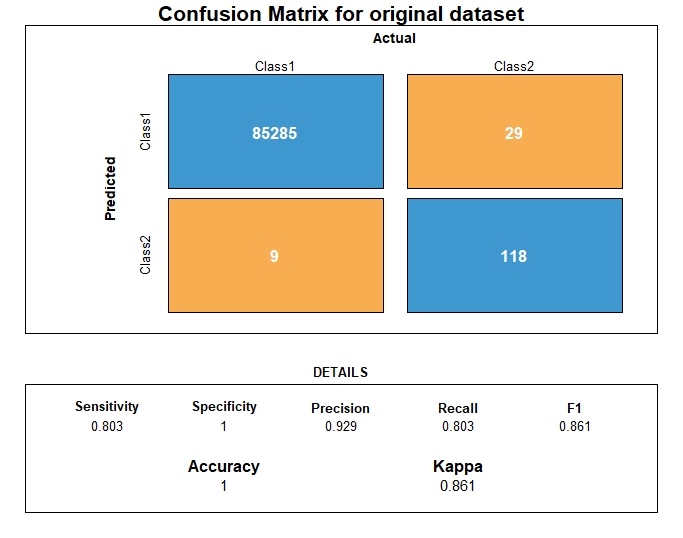

Evaluation Metrics in Machine Learning Models using Python | by Manoj Singh | Analytics Vidhya | Medium

Rizal Fathony on Twitter: "6/ Our framework supports a wide variety of non-decomposable performance metrics that can be expressed as a sum of fractions over the entities in the confusion matrix. This

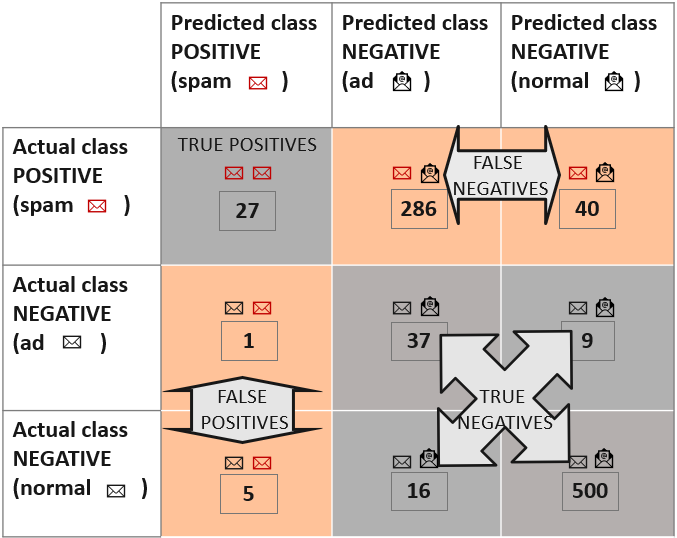

Confusion matrix and kappa coefficient for the 2002 land-use/land-cover...